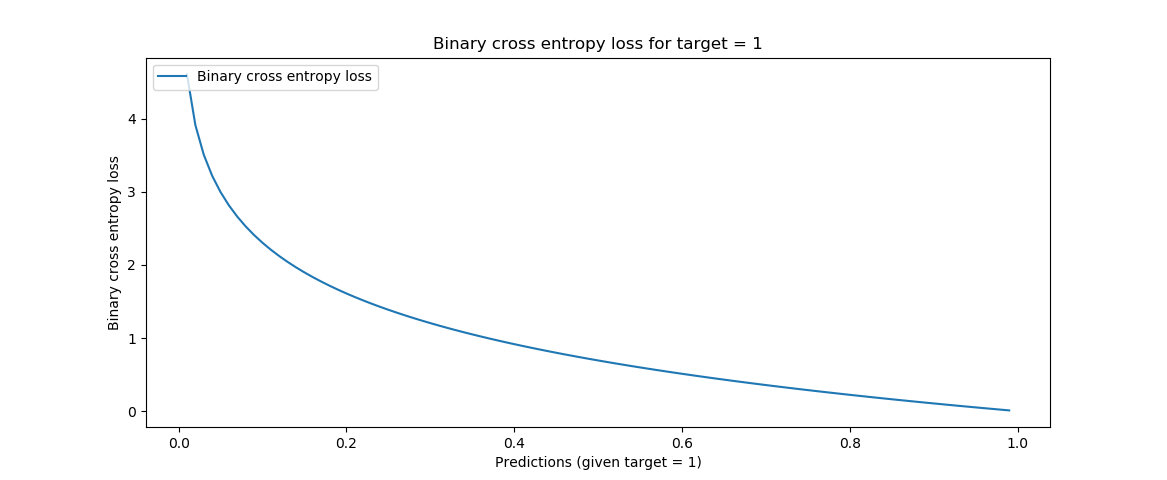

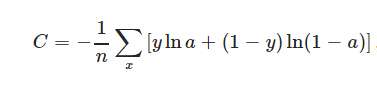

Binary cross-entropy is a logarithmic loss. Mean squared error also has a built-in implementation in Keras.

For per-pixel classification over 7 classes, one option is to add a softmax activation layer once the output has been reshaped so that the classes sit on the last axis, and then use a cross-entropy loss. The target data should be reshaped accordingly.

The other option is to implement a depth-wise softmax as a function `depth_softmax(matrix)` that normalizes the sigmoided matrix across the depth axis, `softmax_matrix = sigmoided_matrix / K.sum(sigmoided_matrix, axis=0)`, and to use it as a Lambda layer after the final layer, `model.add(Deconvolution2D(7, 1, 1, border_mode='same', output_shape=(7, n_rows, n_cols)))`. If the tf `image_dim_ordering` is used, you can do away with the Permute layers.
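The depth-wise normalization mentioned here can be sketched in plain Python, without Keras, to make the arithmetic visible. This is a minimal sketch under assumptions: the text only shows the division step, so the elementwise sigmoid is inferred from the name `sigmoided_matrix`, and the nested-list layout `(channels, rows, cols)` and the sample `scores` are made up for illustration. Note that, despite the name, it divides sigmoid outputs by their per-pixel sum rather than exponentiating as a true softmax would.

```python
import math


def depth_softmax(matrix):
    """Normalize sigmoid activations across axis 0 (the depth/channel axis).

    `matrix` is a nested list shaped (channels, rows, cols).
    """
    # Assumption: an elementwise sigmoid is applied first, as the name
    # `sigmoided_matrix` in the text suggests.
    sigmoided = [
        [[1.0 / (1.0 + math.exp(-x)) for x in row] for row in channel]
        for channel in matrix
    ]
    n_rows, n_cols = len(matrix[0]), len(matrix[0][0])
    # Per-pixel sum over the channel axis, mirroring K.sum(..., axis=0).
    totals = [
        [sum(channel[r][c] for channel in sigmoided) for c in range(n_cols)]
        for r in range(n_rows)
    ]
    return [
        [[channel[r][c] / totals[r][c] for c in range(n_cols)] for r in range(n_rows)]
        for channel in sigmoided
    ]


# Three channels over a 2x2 image (values are arbitrary).
scores = [
    [[2.0, -1.0], [0.5, 0.0]],
    [[0.0, 1.0], [0.5, -2.0]],
    [[-1.0, 3.0], [0.5, 1.0]],
]
probs = depth_softmax(scores)
# After normalization, the channel values at every pixel sum to 1.
pixel_sums = [[sum(ch[r][c] for ch in probs) for c in range(2)] for r in range(2)]
```

At the pixel where all three channels hold the same score, each channel ends up with one third of the probability mass, which is what a per-pixel class distribution should look like.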

To follow along, open a folder in your file explorer (on Windows, macOS, or Linux) and create a file called binary-cross-entropy.py. Let's now create the Keras model using binary crossentropy.

Suppose that our model predicts p while the target is c; then the binary cross-entropy is `-(c * log(p) + (1 - c) * log(1 - p))`. Keras provides this as `binary_crossentropy`, which defines the binary logarithmic loss. Focal loss is a variant of binary cross-entropy that down-weights well-classified examples.

For per-pixel outputs, the problem with using softmax is that Keras (at least in the 1.x API used here) does not support softmax on each pixel. The easiest way around this is to permute the dimensions to `(n_rows, n_cols, 7)` using a Permute layer and then reshape the result to `(n_rows*n_cols, 7)` using a Reshape layer.
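To make the loss for a prediction p and target c concrete, here is a minimal plain-Python sketch of binary cross-entropy for a single example, next to a basic focal loss for comparison. It is not the Keras implementation: the function names, the clipping constant `eps`, and the default `gamma=2.0` are illustrative assumptions, and the focal loss shown omits any class-balancing weight.

```python
import math


def binary_cross_entropy(c, p, eps=1e-7):
    """Binary cross-entropy for one prediction p in (0, 1) and target c in {0, 1}."""
    # Clip p away from 0 and 1 so log() stays finite; eps is an illustrative choice.
    p = min(max(p, eps), 1.0 - eps)
    return -(c * math.log(p) + (1 - c) * math.log(1.0 - p))


def focal_loss(c, p, gamma=2.0, eps=1e-7):
    """Focal loss without class weighting: cross-entropy scaled by (1 - p_t) ** gamma."""
    p = min(max(p, eps), 1.0 - eps)
    # p_t is the probability the model assigned to the true class.
    p_t = p if c == 1 else 1.0 - p
    return -((1.0 - p_t) ** gamma) * math.log(p_t)


easy = (1, 0.9)  # target 1, confident and correct
hard = (1, 0.1)  # target 1, confident and wrong
for c, p in (easy, hard):
    print(f"c={c} p={p}  bce={binary_cross_entropy(c, p):.4f}  focal={focal_loss(c, p):.4f}")
```

The confident, correct prediction costs about 0.105 under binary cross-entropy while the confident, wrong one costs about 2.303. The focal variant shrinks the first far more than the second, which is the down-weighting of well-classified examples.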

Entropy is the measure of uncertainty in a distribution, and cross-entropy is the value representing the uncertainty between the target distribution and the predicted distribution. In Keras, the three cross-entropy loss functions (binary, categorical, and sparse categorical) each expect two inputs: the true labels and the predicted labels. The `BinaryCrossentropy` class, for example, computes the cross-entropy loss between true labels and predicted labels.

Take the Kaggle competition in which you write an algorithm to classify whether images contain a dog or a cat. It is a binary classification task where the output of the model is a single number in the range 0 to 1: a lower value indicates the image is more "Cat"-like, and a higher value indicates the model thinks the image is more "Dog"-like.

Binary cross-entropy loss should be used with sigmoid activation in the last layer, and it severely penalizes opposite predictions. It does not take into account that the output is one-hot coded and that the sum of the predictions should be 1. But because mis-predictions are severely penalized, the model still learns, to some extent, to classify properly. To enforce the prior of one-hot coding, use softmax activation with categorical cross-entropy. For the same reason, be careful not to select binary cross-entropy by mistake when `y_true` is one-hot encoded.

For compiling, use `model.compile(loss='binary_crossentropy', optimizer='sgd')`; the optimizer can be substituted for another one.

A metric function is similar to an objective function, except that the results from evaluating a metric are not used when training the model. Metric functions are supplied in the `metrics` parameter when a model is compiled. You can either pass the name of an existing metric or pass a symbolic function (a Theano one, for example).
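The point about one-hot outputs can be checked numerically. The sketch below, in plain Python rather than Keras, compares per-class sigmoid outputs (which are scored independently and need not sum to 1) with a softmax over the same scores (which always sums to 1), and then computes categorical cross-entropy against a one-hot target. The helper names, the `logits`, and the `target` are illustrative assumptions, not values from the text.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def softmax(logits):
    # Subtract the max before exponentiating for numerical stability.
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def categorical_cross_entropy(one_hot, probs):
    """Cross-entropy between a one-hot target and a probability vector."""
    return -sum(t * math.log(p) for t, p in zip(one_hot, probs))


logits = [2.0, 1.0, 0.1]  # arbitrary scores for three classes
target = [1, 0, 0]        # one-hot: class 0 is correct

independent = [sigmoid(x) for x in logits]  # each class scored on its own
coupled = softmax(logits)                   # classes compete for probability mass

print("sum of sigmoids:", round(sum(independent), 4))
print("sum of softmax: ", round(sum(coupled), 4))
print("categorical cross-entropy:", round(categorical_cross_entropy(target, coupled), 4))
```

Because the softmax outputs are coupled, raising the probability of one class necessarily lowers the others, which is the one-hot prior that independent sigmoid outputs do not enforce.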

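A metric is a function that is used to judge the performance of your model.

Last-layer activation and loss function combinations, by example:

- Multi-class, single-label classification (softmax with categorical cross-entropy): MNIST has 10 classes and a single label (one prediction is one digit)
- Multi-class, multi-label classification (sigmoid with binary cross-entropy): news tags classification, where one blog can have multiple tags
- Regression to arbitrary values (no activation with mean squared error): predicting a house price (an integer or floating-point value)
- Regression to values between 0 and 1 (sigmoid with mean squared error or binary cross-entropy): engine health assessment, where 0 is broken and 1 is new

For a weighted binary crossentropy between an output tensor and a target tensor, just use `tf.nn.weighted_cross_entropy_with_logits` instead of `tf.nn.sigmoid_cross_entropy_with_logits`, passing `pos_weight` into the calculation. The implementation needs `import tensorflow as tf` and `from keras import backend as K`. Its extra argument is `pos_weight`: a coefficient to use on the positive examples.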
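The effect of the positive-class coefficient can be illustrated without TensorFlow. The following is a plain-Python sketch of the quantity that `tf.nn.weighted_cross_entropy_with_logits` computes for a single raw score (logit) and a 0/1 label; it is not the library code. The names `softplus` and `weighted_bce_with_logits` are hypothetical helpers, and the sample logits and the weight of 3.0 are arbitrary.

```python
import math


def softplus(x):
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def weighted_bce_with_logits(label, logit, pos_weight):
    """Weighted binary cross-entropy for one raw score (logit) and a 0/1 label."""
    # -log(sigmoid(x)) == softplus(-x) and -log(1 - sigmoid(x)) == softplus(x).
    positive_term = pos_weight * label * softplus(-logit)
    negative_term = (1 - label) * softplus(logit)
    return positive_term + negative_term


# pos_weight = 3.0 is an arbitrary value chosen for illustration.
for label, logit in ((1, -1.0), (0, -1.0)):
    plain = weighted_bce_with_logits(label, logit, pos_weight=1.0)
    weighted = weighted_bce_with_logits(label, logit, pos_weight=3.0)
    print(f"label={label} logit={logit}  plain={plain:.4f}  weighted={weighted:.4f}")
```

With a weight of 1 this reduces to ordinary sigmoid cross-entropy. A weight above 1 scales up only the loss on positive examples, so missed positives cost more while negatives are unaffected.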